Why Michigan Tech Needs Assessment

Assessment is a systematic process for the continuous improvement of student learning that assures educational quality. It enables the university community to identify opportunities to improve courses and curricula, teaching practices, and student life activities, and to make informed decisions about degree programs.

At its core, there is one reason to do assessment: to assure that our students are learning at the level we expect of Michigan Tech graduates. Assessment is also driven by external accountability, and our professional accreditation (such as ABET, AACSB, and SAF) depends on our accreditation by the Higher Learning Commission.

Assessment at Michigan Tech strives to find a balance between the internal drive for educational improvement and external demands for accountability.

What is Assessment?

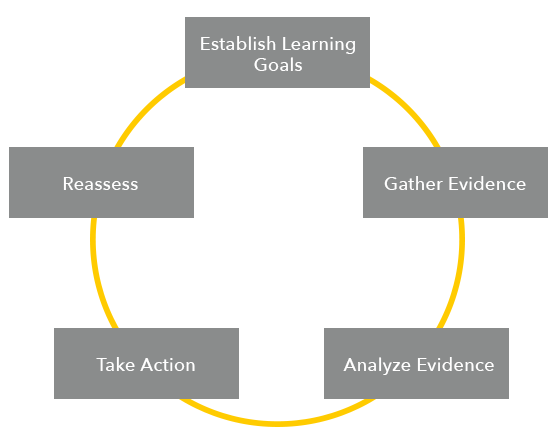

While faculty implicitly engage in continuously improving student learning when they teach classes, evaluate student performance, and respond with changes to their courses, assessment of student learning makes this process explicit. The assessment cycle consists of five iterative steps; when the cycle is completed, we say we have “closed the loop.” The cycle can be used to improve any program of study, from courses to degree programs to other institutional outcomes.

While assessment of specific courses is very important, a university assessment program supports students in achieving learning goals that cannot be achieved in a single course. Scaffolded learning builds student competencies by offering instruction and opportunities for practice throughout the learning experience in an intentional way. General education, the degree program, and co-curricular student life programs work together to achieve the learning goals of the university.

Assessment as a Strategy of Inquiry

- Makes explicit a process that is second nature to most faculty

- Focuses on questions that are important to faculty, the program, and the institution

- Assures that students have the chance to achieve the outcomes

- Connects process to outcomes (learning)

- Understands that learning is not just about teaching

- Sets performance targets that are reasonable, logical, and support learning

- Looks for patterns, not data points

- Frames a specific goal

- Documents what worked -- and what still needs to be done -- in a way that accurately reflects how well students are achieving the learning outcomes

- Develops actions designed to facilitate the desired response that leads to shared responsibility for action on multiple levels across the institution

From “Assessment of Learning: Are we selling out or buying in?”, a presentation by Susan R. Hatfield, Ph.D., Visiting Scholar, Higher Learning Commission, at the Higher Learning Commission Annual Conference, April 2013.